As artificial intelligence applications increasingly rely on high-dimensional data such as text, images, and audio, the need for efficient storage and retrieval of vector embeddings has become critical. Traditional relational databases struggle with similarity search at scale, leading to the rise of vector databases purpose-built for handling embeddings. Tools like Pinecone have emerged as powerful infrastructure components that enable fast, scalable, and production-ready semantic search. Understanding how these tools work and how they compare is essential for teams building modern AI systems.

TLDR: Vector database tools like Pinecone are designed to store and retrieve high-dimensional embeddings efficiently for AI applications. They enable fast similarity search, real-time updates, and scalable infrastructure for semantic search, recommendation systems, and generative AI. Compared to traditional databases, vector databases use specialized indexing methods optimized for nearest neighbor search. Evaluating features like scalability, latency, filtering support, and deployment flexibility helps organizations choose the right solution.

What Are Vector Embeddings?

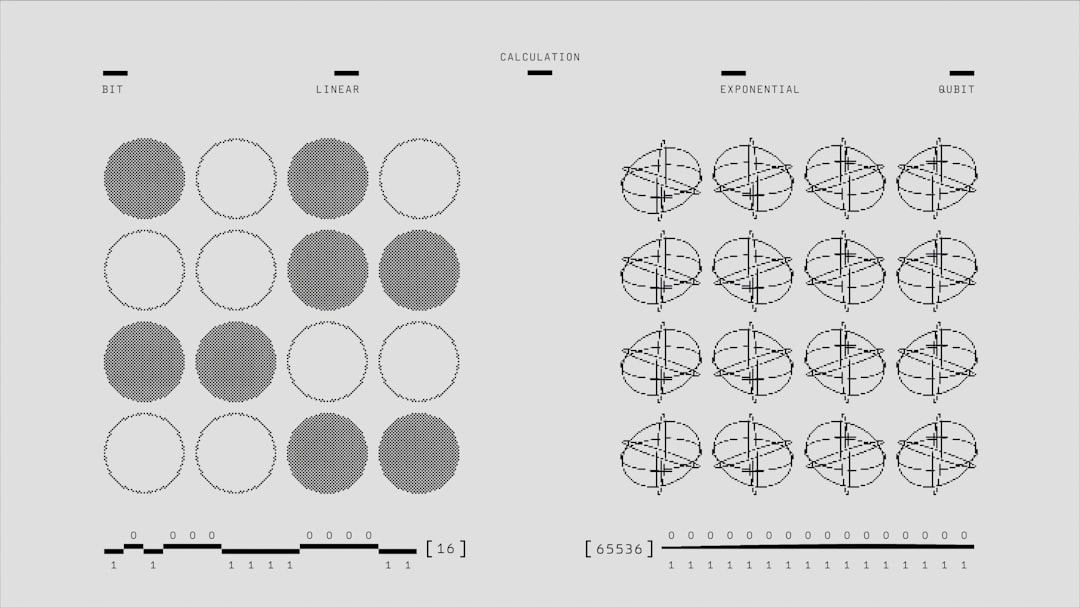

Vector embeddings are numerical representations of data such as words, sentences, images, or user behavior. Created using machine learning models, embeddings translate complex information into dense numerical vectors that capture meaning and relationships.

For example:

- Text embeddings map sentences with similar meanings closer together in vector space.

- Image embeddings group visually similar images together.

- User embeddings help recommendation engines find similar behavior patterns.
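The sketch below makes "closer together in vector space" concrete. It scores a few vectors with cosine similarity, a common closeness measure for text embeddings. The four-dimensional vectors are hypothetical and written by hand for illustration. They do not come from any real model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction, 0.0 means orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 4-dimensional embeddings, hand-written for illustration.
# A real embedding model outputs hundreds or thousands of dimensions.
embeddings = {
    "How do I reset my password?": [0.9, 0.1, 0.0, 0.2],
    "I forgot my login credentials": [0.8, 0.2, 0.1, 0.3],
    "Best hiking trails near Denver": [0.0, 0.1, 0.9, 0.4],
}

query = embeddings["How do I reset my password?"]
for text, vec in embeddings.items():
    print(f"{cosine_similarity(query, vec):.3f}  {text}")
```

The two account-related sentences score far higher against each other than against the hiking sentence. A vector database exploits exactly this property at scale.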

The challenge lies not in generating embeddings, but in storing and querying millions—or even billions—of them efficiently.

Why Traditional Databases Fall Short

Traditional SQL and NoSQL databases are optimized for exact match queries and structured data retrieval. However, similarity search requires:

- Nearest neighbor search rather than exact matching

- Efficient indexing in high-dimensional space

- Low-latency retrieval across massive datasets

Performing similarity search by scanning and comparing every stored vector in a conventional database is computationally expensive and slow. This is where vector databases shine. They use Approximate Nearest Neighbor (ANN) indexing methods, including HNSW and IVF, to dramatically reduce search time while keeping recall (the share of true nearest neighbors returned) high.
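For reference, here is a minimal sketch of the exact, brute-force baseline that ANN indexes are designed to avoid. It assumes Euclidean distance and randomly generated data. Every query touches every stored vector, so the cost grows linearly with the size of the collection.

```python
import heapq
import math
import random


def exact_knn(query, vectors, k):
    """Exact k-NN by full scan: one distance per stored vector, so O(n * d) work per query."""
    # heapq.nsmallest keeps only the k closest (distance, id) pairs while streaming over all n vectors.
    return heapq.nsmallest(k, ((math.dist(query, v), vid) for vid, v in vectors.items()))


# Hypothetical data: 10,000 random 64-dimensional vectors.
random.seed(0)
DIM = 64
vectors = {i: [random.gauss(0.0, 1.0) for _ in range(DIM)] for i in range(10_000)}
query = [random.gauss(0.0, 1.0) for _ in range(DIM)]

for distance, vid in exact_knn(query, vectors, k=5):
    print(f"id={vid}  distance={distance:.3f}")
```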

Pinecone: A Leading Vector Database Solution

Pinecone is one of the most widely adopted managed vector database services. Built specifically for embedding storage and similarity search, it abstracts away infrastructure complexity and provides a highly scalable solution.

Key Features of Pinecone

- Fully managed service: No cluster maintenance required

- High scalability: Handles billions of vectors

- Low-latency search: Optimized for real-time applications

- Metadata filtering: Combines vector search with structured filtering

- Automatic scaling: Adjusts compute resources as needed
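Pinecone queries accept a metadata filter written with MongoDB-style operators such as `$eq`, `$in`, and `$gte`. The in-memory sketch below only imitates that filter style to show how a structured predicate combines with vector ranking. The function names, records, and the small operator subset are hypothetical, and this is not Pinecone's implementation.

```python
import math

# A small, hypothetical subset of MongoDB-style filter operators.
OPERATORS = {
    "$eq": lambda value, arg: value == arg,
    "$in": lambda value, arg: value in arg,
    "$gte": lambda value, arg: value is not None and value >= arg,
    "$lte": lambda value, arg: value is not None and value <= arg,
}


def matches(metadata, flt):
    """True if every {field: {operator: argument}} condition holds (conditions are ANDed)."""
    for field, condition in flt.items():
        value = metadata.get(field)
        for op, arg in condition.items():
            if not OPERATORS[op](value, arg):
                return False
    return True


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def filtered_search(query, records, flt, top_k):
    """Keep records whose metadata passes the filter, then rank survivors by cosine similarity."""
    survivors = [r for r in records if matches(r["metadata"], flt)]
    survivors.sort(key=lambda r: cosine(query, r["values"]), reverse=True)
    return [r["id"] for r in survivors[:top_k]]


records = [
    {"id": "doc1", "values": [0.9, 0.1, 0.0], "metadata": {"lang": "en", "year": 2023}},
    {"id": "doc2", "values": [0.8, 0.2, 0.1], "metadata": {"lang": "de", "year": 2024}},
    {"id": "doc3", "values": [0.7, 0.3, 0.2], "metadata": {"lang": "en", "year": 2021}},
    {"id": "doc4", "values": [0.1, 0.9, 0.3], "metadata": {"lang": "en", "year": 2024}},
]

print(filtered_search([1.0, 0.0, 0.0], records, {"lang": {"$eq": "en"}, "year": {"$gte": 2022}}, top_k=2))
# ['doc1', 'doc4']
```

This sketch filters first and then ranks by brute force. Many production engines instead apply the filter during ANN index traversal, so filtering does not require a full scan.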

Pinecone is commonly used in:

- Semantic search applications

- Retrieval-Augmented Generation (RAG) systems

- Personalized recommendations

- Anomaly detection

- Chatbot memory systems

Its developer-friendly API and cloud-native architecture make it especially appealing for startups and enterprises building AI-driven applications.

Other Popular Vector Database Tools

While Pinecone is a major player, several other vector databases offer strong capabilities. Each comes with distinct trade-offs regarding deployment flexibility, cost, and performance.

1. Weaviate

Weaviate is an open-source vector database that supports hybrid search (combining keyword and vector search). It integrates easily with ML models and offers GraphQL APIs. Its modular design allows extensibility with additional ML modules.
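Weaviate's hybrid search blends a keyword score with a vector score, weighted by an `alpha` parameter. At `alpha = 1` the result is pure vector search, and at `alpha = 0` it is pure keyword search. The sketch below illustrates the idea under simplifying assumptions. A term-overlap score stands in for BM25, and min-max normalization is similar in spirit to relative-score fusion. The helper names and the vector scores are hypothetical, and this is not Weaviate's code.

```python
import re


def keyword_score(query, doc):
    """Stand-in for BM25: the fraction of query terms that appear in the document."""
    q_terms = set(re.findall(r"\w+", query.lower()))
    d_terms = set(re.findall(r"\w+", doc.lower()))
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def min_max(scores):
    """Rescale a {doc_id: score} dict to [0, 1] so keyword and vector scores are comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_rank(keyword_scores, vector_scores, alpha):
    """alpha = 1.0 ranks purely by vector score, alpha = 0.0 purely by keyword score."""
    kw, vec = min_max(keyword_scores), min_max(vector_scores)
    fused = {doc: alpha * vec[doc] + (1 - alpha) * kw[doc] for doc in vector_scores}
    return sorted(fused, key=fused.get, reverse=True)


docs = {
    "d1": "Error code E42 when printing",
    "d2": "Printer will not connect to Wi-Fi",
    "d3": "Troubleshooting common printing problems",
}
query = "error E42"
keyword_scores = {doc: keyword_score(query, text) for doc, text in docs.items()}
# Hypothetical cosine similarities, as an embedding model might return them.
vector_scores = {"d1": 0.62, "d2": 0.71, "d3": 0.80}

for alpha in (0.0, 0.25, 1.0):
    print(alpha, hybrid_rank(keyword_scores, vector_scores, alpha))
```

With `alpha = 1.0`, pure vector ranking puts the generic troubleshooting page first. Blending in keyword evidence surfaces the document that contains the exact error code, which is the main motivation for hybrid search.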

2. Milvus

Milvus is a high-performance open-source vector database designed for scalability. It supports multiple ANN indexing algorithms and is well-suited for large-scale deployments with billions of embeddings.

3. Qdrant

Qdrant is optimized for filtering and real-time updates. It emphasizes production-grade filtering and ease of deployment, and is available both as open source and as a managed service.

4. Redis with Vector Search

Redis has added vector similarity search through its search module. Because Redis keeps data in memory, it can pair embedding storage with very fast retrieval.

Comparison Chart: Leading Vector Database Tools

| Feature | Pinecone | Weaviate | Milvus | Qdrant | Redis Vector |
|---|---|---|---|---|---|
| Deployment | Fully managed cloud | Open source + cloud | Open source + cloud | Open source + cloud | Self-managed + cloud |
| Scalability | Very high | High | Very high | High | Moderate to high |
| Indexing Methods | Proprietary ANN | HNSW | IVF, HNSW, more | HNSW | HNSW, Flat |
| Metadata Filtering | Strong support | Strong | Supported | Advanced filtering | Supported |
| Ease of Use | Very beginner-friendly | Moderate | Advanced setup | Developer-friendly | Requires Redis knowledge |
| Best For | Enterprise AI apps | Hybrid search systems | Massive-scale workloads | Filter-heavy use cases | Low-latency applications |

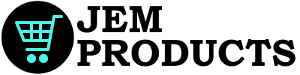

How Vector Databases Optimize Similarity Search

Vector databases use specialized indexing structures to make similarity search efficient. Instead of comparing the query against every stored vector, they organize data into navigable graph structures or cluster partitions, so that only a small fraction of the vectors needs to be examined.
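The core move in a navigable graph index is greedy routing. Start at an entry point and repeatedly hop to whichever neighbor is closer to the query. The sketch below is a deliberately simplified, single-layer version. HNSW adds a hierarchy of layers, keeps a candidate list (controlled by `ef`) rather than a single current node, and builds its graph incrementally. The brute-force graph construction, point counts, and parameters here are hypothetical choices for illustration. Greedy search can stop at a local minimum instead of the true nearest neighbor, which is what makes the method approximate.

```python
import math
import random


def build_knn_graph(points, m):
    """Link every point to its m nearest neighbors (brute force, for illustration only)."""
    graph = {}
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        graph[i] = others[:m]
    return graph


def greedy_search(query, points, graph, entry):
    """Hop to whichever neighbor is closest to the query; stop when no neighbor improves."""
    current, current_d = entry, math.dist(query, points[entry])
    evaluated = 1  # number of distance computations performed
    while True:
        best, best_d = current, current_d
        for nb in graph[current]:
            d = math.dist(query, points[nb])
            evaluated += 1
            if d < best_d:
                best, best_d = nb, d
        if best == current:  # local minimum: no neighbor is closer to the query
            return current, evaluated
        current, current_d = best, best_d


random.seed(1)
points = [[random.random() for _ in range(8)] for _ in range(500)]
graph = build_knn_graph(points, m=10)
query = [random.random() for _ in range(8)]

found, cost = greedy_search(query, points, graph, entry=0)
exact = min(range(len(points)), key=lambda i: math.dist(query, points[i]))
print(f"greedy result {found} after {cost} distance computations; exact nearest {exact}")
```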

Some common optimization techniques include:

- Hierarchical Navigable Small Worlds (HNSW): Graph-based structure that navigates nearest neighbors efficiently.

- Inverted File Index (IVF): Clustering-based partitioning for faster lookups.

- Product Quantization: Compresses vectors to reduce memory footprint.

- Sharding: Distributes vectors across nodes for horizontal scaling.
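The following is a minimal IVF sketch. It assumes plain k-means for partitioning and Euclidean distance. Vectors are grouped into `n_lists` clusters, and a query scans only the `nprobe` partitions whose centroids are closest. The parameter names follow common convention (FAISS and Milvus use `nlist` and `nprobe`), but the class and helpers are hypothetical and are not any library's implementation.

```python
import math
import random


def kmeans(points, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        for c, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


class IVFIndex:
    def __init__(self, points, n_lists):
        self.points = points
        self.centroids = kmeans(points, n_lists)
        # Inverted lists: partition id -> ids of the vectors assigned to that partition.
        self.lists = {c: [] for c in range(n_lists)}
        for pid, p in enumerate(points):
            self.lists[self._closest_centroids(p, 1)[0]].append(pid)

    def _closest_centroids(self, v, n):
        ranked = sorted(range(len(self.centroids)), key=lambda c: math.dist(v, self.centroids[c]))
        return ranked[:n]

    def search(self, query, k, nprobe):
        """Scan only the vectors in the nprobe partitions whose centroids are closest to the query."""
        candidates = [pid for c in self._closest_centroids(query, nprobe) for pid in self.lists[c]]
        candidates.sort(key=lambda pid: math.dist(query, self.points[pid]))
        return candidates[:k], len(candidates)


random.seed(2)
points = [[random.gauss(0.0, 1.0) for _ in range(8)] for _ in range(2000)]
index = IVFIndex(points, n_lists=20)
query = [random.gauss(0.0, 1.0) for _ in range(8)]

for nprobe in (1, 5, 20):
    ids, scanned = index.search(query, k=5, nprobe=nprobe)
    print(f"nprobe={nprobe:2d}: scanned {scanned} of {len(points)} vectors -> {ids}")
```

Raising `nprobe` trades speed for recall. When `nprobe` equals `n_lists`, every partition is scanned and the search degenerates into an exact full scan.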

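Together, these techniques turn what would otherwise be an O(n) scan of every stored vector per query into something practical for production. HNSW search cost grows roughly logarithmically with dataset size, and IVF examines only a few partitions. Quantization makes each distance computation cheaper, and sharding spreads the remaining work across nodes.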
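Product quantization compresses vectors by splitting each one into `m` subvectors and replacing every subvector with the index of its nearest centroid in a small per-subspace codebook. At query time, distances are approximated with table lookups, a scheme known as asymmetric distance computation. The sketch below is a minimal illustration. The class name, the parameter values (`m = 4` subspaces, `k = 16` centroids), and the random data are hypothetical, and many implementations use 256 centroids per subspace so that each code fits in one byte.

```python
import math
import random


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            clusters[min(range(k), key=lambda c: sq_dist(p, centroids[c]))].append(p)
        for c, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


class ProductQuantizer:
    def __init__(self, dim, m, k):
        if dim % m:
            raise ValueError("dim must split evenly into m subspaces")
        self.m, self.k, self.sub = m, k, dim // m
        self.codebooks = []

    def _split(self, v):
        return [v[j * self.sub:(j + 1) * self.sub] for j in range(self.m)]

    def fit(self, vectors):
        """Train one codebook of k centroids per subspace."""
        parts = [self._split(v) for v in vectors]
        self.codebooks = [kmeans([p[j] for p in parts], self.k, seed=j) for j in range(self.m)]
        return self

    def encode(self, v):
        """Replace each subvector with the index of its nearest centroid: m small integers."""
        return [min(range(self.k), key=lambda c: sq_dist(s, book[c]))
                for s, book in zip(self._split(v), self.codebooks)]

    def decode(self, code):
        return [x for c, book in zip(code, self.codebooks) for x in book[c]]

    def distance_tables(self, query):
        """Squared distance from each query subvector to every centroid, computed once per query."""
        return [[sq_dist(s, cent) for cent in book] for s, book in zip(self._split(query), self.codebooks)]

    @staticmethod
    def adc(tables, code):
        """Asymmetric distance: m table lookups replace a full d-dimensional distance computation."""
        return sum(table[c] for table, c in zip(tables, code))


random.seed(3)
DIM, M, K = 16, 4, 16
data = [[random.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(1000)]
pq = ProductQuantizer(DIM, M, K).fit(data)
codes = [pq.encode(v) for v in data]

query = [random.gauss(0.0, 1.0) for _ in range(DIM)]
tables = pq.distance_tables(query)
approx_best = min(range(len(data)), key=lambda i: pq.adc(tables, codes[i]))
exact_best = min(range(len(data)), key=lambda i: sq_dist(query, data[i]))
print("code for vector 0:", codes[0])
print("approximate nearest:", approx_best, "exact nearest:", exact_best)
```

The lookup trick works because squared Euclidean distance decomposes into a sum over disjoint subspaces. With `K = 16`, each code needs 4 bits, so a 16-dimensional float32 vector (64 bytes) shrinks to 4 codes (2 bytes), a 32x reduction. The cost is some distance accuracy.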

Key Considerations When Choosing a Vector Database

Selecting the right tool depends on project scale and requirements. Important evaluation factors include:

- Latency requirements: Real-time recommendation systems demand millisecond response times.

- Data size: Millions vs. billions of embeddings require different scaling capabilities.

- Filtering needs: Applications often combine vector similarity with structured metadata constraints.

- Deployment preference: Managed services reduce operational burden.

- Cost structure: Managed-service pricing versus the cost of running self-hosted infrastructure.

For organizations without DevOps resources, managed solutions like Pinecone offer considerable advantages. For teams seeking full control and customization, open-source systems like Milvus may be more appropriate.

Use Cases Driving Adoption

Vector databases are at the core of many modern AI applications:

- Generative AI and RAG: Enhancing language model outputs with external knowledge retrieval.

- E-commerce search: Semantic product discovery beyond keyword matching.

- Fraud detection: Identifying similar behavior patterns in transaction data.

- Image search: Finding visually similar assets in media libraries.

- Customer support automation: Matching user queries with relevant documentation.
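The retrieval step behind RAG can be sketched in a few lines: embed the question, fetch the most similar stored chunks, and place them in the prompt ahead of the question. In the sketch below, `embed` is a hypothetical stand-in that uses sparse word counts rather than a real embedding model, and no language model is called. In a real system, chunk embeddings would be computed once and stored in the vector database instead of being recomputed per query.

```python
import math
import re
from collections import Counter


def embed(text):
    """Hypothetical stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k):
    """Return the k stored chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda chunk: cosine(q, embed(chunk)), reverse=True)[:k]


def build_prompt(question, context):
    """Ground the model by placing the retrieved text ahead of the question."""
    sources = "\n".join(f"- {c}" for c in context)
    return f"Answer using only the context below.\n\nContext:\n{sources}\n\nQuestion: {question}"


chunks = [
    "Reset your password from the account settings page.",
    "Our office is closed on public holidays.",
    "Invoices are emailed on the first day of each month.",
]
question = "How do I reset my password?"
print(build_prompt(question, retrieve(question, chunks, k=1)))
```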

The rapid adoption of large language models has further accelerated demand for high-performance vector storage infrastructure.

The Future of Vector Databases

As AI systems become more integrated into business workflows, vector databases are evolving rapidly. Trends include:

- Deeper integration with LLM frameworks

- Native hybrid search combining structured and semantic queries

- Improved compression techniques to reduce infrastructure costs

- Increased support for multi-modal embeddings (text, image, audio)

Rather than serving as niche tools, vector databases are becoming foundational elements of AI architecture—much like relational databases became essential to web applications in the early 2000s.

Frequently Asked Questions (FAQ)

1. What is a vector database?

A vector database is a specialized system designed to store, index, and query high-dimensional vector embeddings efficiently, primarily for similarity search applications.

2. How is Pinecone different from traditional databases?

Pinecone is optimized for approximate nearest neighbor search in high-dimensional space, whereas traditional databases focus on exact matches and structured queries.

3. Are vector databases only useful for AI applications?

They are primarily used in AI-driven systems such as search, recommendations, and generative AI, but any application requiring similarity matching can benefit.

4. What is Approximate Nearest Neighbor (ANN) search?

ANN is a technique that retrieves highly similar vectors at a fraction of the computational cost of exact nearest neighbor search. It accepts a small loss in recall (occasionally missing a true nearest neighbor) in exchange for large speed gains.
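The accuracy side of this trade-off is commonly measured as recall@k: the fraction of the true top-k neighbors that the approximate search also returns. Here is a minimal sketch with hypothetical result lists. Rank order within the top k is ignored.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbors that the approximate search also returned."""
    truth = set(exact_ids[:k])
    return len(truth & set(approx_ids[:k])) / k


# Hypothetical result lists for one query.
exact = [3, 7, 1, 9, 4]   # ground truth from a brute-force scan
approx = [3, 1, 7, 8, 4]  # ANN result: missed id 9, returned id 8 instead
print(recall_at_k(approx, exact, k=5))  # 0.8
```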

5. Can vector databases scale to billions of embeddings?

Yes. Tools like Pinecone and Milvus are specifically designed for horizontal scalability and can handle billions of vectors with distributed architectures.
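Horizontal scaling usually relies on a scatter-gather pattern. Each shard answers the query locally, and a coordinator merges the partial results. Merging per-shard top-k lists is sufficient, because every vector in the global top-k must also be in its own shard's top-k. The sketch below is hypothetical. It shards by `id % N_SHARDS` and uses exact local search for simplicity, whereas a real deployment would run an ANN index on each shard.

```python
import heapq
import itertools
import math
import random


def shard_top_k(shard, query, k):
    """Local exact top-k on one shard, as sorted (distance, id) pairs."""
    return heapq.nsmallest(k, ((math.dist(query, v), vid) for vid, v in shard.items()))


def scatter_gather(shards, query, k):
    """Send the query to every shard, then merge the sorted partial results into a global top-k."""
    partials = [shard_top_k(shard, query, k) for shard in shards]
    return list(itertools.islice(heapq.merge(*partials), k))


random.seed(4)
N_SHARDS, DIM = 4, 16
shards = [{} for _ in range(N_SHARDS)]
for vid in range(4000):
    # Hypothetical placement rule: assign each vector to a shard by its id.
    shards[vid % N_SHARDS][vid] = [random.gauss(0.0, 1.0) for _ in range(DIM)]

query = [random.gauss(0.0, 1.0) for _ in range(DIM)]
print(scatter_gather(shards, query, k=5))
```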

6. Is it better to use open-source or managed vector databases?

It depends on infrastructure expertise and customization needs. Managed services offer simplicity and reduced operational effort, while open-source solutions provide greater flexibility and control.

7. Do vector databases support filtering with metadata?

Yes, most modern vector databases allow combining similarity search with metadata filtering to refine results based on structured attributes.

Vector database tools like Pinecone represent a significant evolution in data infrastructure, enabling intelligent systems to operate efficiently at scale. As semantic applications continue to grow, their role in modern software architecture will only become more central.