As large language models (LLMs) rapidly become embedded in products, workflows, and decision-making systems, the question shifts from “Can it generate text?” to “Can we trust what it generates?” Model evaluation has emerged as one of the most critical disciplines in applied AI. Tools like LangSmith help developers trace, test, and systematically evaluate model outputs. Without structured evaluation, even sophisticated LLM applications risk being unreliable, inconsistent, or misaligned with user expectations.

TLDR: LLM evaluation tools like LangSmith help teams test, trace, and improve AI-generated outputs in a structured and repeatable way. They enable systematic debugging, dataset-driven experimentation, and automated scoring of model responses. As LLM applications grow in complexity, evaluation platforms become essential for ensuring reliability, safety, and performance. In short, evaluation is no longer optional—it’s a core pillar of building production-grade AI systems.

In this article, we’ll explore why LLM evaluation matters, how platforms like LangSmith work, the types of evaluation strategies they enable, and how teams can integrate them into real-world AI development.

Why LLM Evaluation Is Different From Traditional Testing

Traditional software testing is built around deterministic outputs. Given the same input, a function should always return the same result. LLMs, however, are probabilistic systems. Their outputs can vary with the sampling temperature, the phrasing of the prompt, the context supplied alongside it, and even subtle model updates.

This introduces several unique challenges:

- Non-determinism: The same prompt can produce slightly (or significantly) different responses.

- Ambiguity: Multiple outputs may be correct but vary in tone or detail.

- Subjective quality: What defines a “good” answer can depend on context.

- Prompt sensitivity: Minor phrasing changes can alter outcomes dramatically.

Because of this, conventional pass/fail assertions often fall short. Instead, LLM systems require a blend of qualitative and quantitative evaluation strategies. That’s where tools like LangSmith come in.
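To make the contrast with pass/fail assertions concrete, here is a minimal sketch that samples a model several times and reports the fraction of outputs satisfying a property check. The `fake_model` function is a hypothetical stand-in for a sampled LLM call, not a real API, and the `pass_rate` helper is an illustrative name rather than part of any platform.

```python
import random
from typing import Callable

def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a sampled LLM call (not a real API)."""
    phrasings = [
        "Paris is the capital of France.",
        "The capital of France is Paris.",
        "France's capital city is Paris.",
    ]
    return rng.choice(phrasings)

def pass_rate(
    model: Callable[[str, random.Random], str],
    prompt: str,
    check: Callable[[str], bool],
    n: int = 20,
    seed: int = 0,
) -> float:
    """Sample the model n times and return the fraction of outputs passing `check`."""
    rng = random.Random(seed)
    outputs = [model(prompt, rng) for _ in range(n)]
    return sum(check(o) for o in outputs) / n

def mentions_paris(output: str) -> bool:
    """Property check: tolerant of phrasing, strict about the fact."""
    return "paris" in output.lower()

def is_exact(output: str) -> bool:
    """Brittle check: only one phrasing counts as correct."""
    return output == "Paris is the capital of France."

PROMPT = "What is the capital of France?"
property_rate = pass_rate(fake_model, PROMPT, mentions_paris)
exact_rate = pass_rate(fake_model, PROMPT, is_exact)
print(f"property check pass rate: {property_rate:.2f}")
print(f"exact-match pass rate:    {exact_rate:.2f}")
```

The property check accepts every phrasing that contains the right fact, while the exact-match check penalizes correct answers that are simply worded differently.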

What Is LangSmith?

LangSmith is an observability and evaluation platform designed specifically for LLM-powered applications. It provides developers with visibility into how prompts are processed, how chains of calls interact, and how outputs perform across datasets.

At its core, LangSmith focuses on three pillars:

- Tracing – Recording step-by-step execution of LLM calls and chains.

- Dataset Evaluation – Testing models against structured example sets.

- Experimentation – Comparing prompts, models, or configurations side by side.

Rather than manually inspecting outputs one by one, developers can systematically analyze performance across hundreds or thousands of test cases.

The Role of Tracing in Debugging

When something goes wrong in an LLM application, it’s rarely obvious where the issue originates. Is the system message unclear? Is the retrieval component returning irrelevant context? Did the temperature setting introduce variability?

Tracing tools provide visibility into:

- Prompt inputs and outputs

- Intermediate reasoning steps

- Retrieval queries and returned documents

- Tool calls within agent systems

- Token usage and latency metrics

This is particularly important for applications built with chains or agents, where multiple steps depend on each other. A failure in one step can cascade downstream, so the final response is wrong even when the later steps behaved correctly.

Instead of guessing, developers can inspect execution paths visually, identify bottlenecks, and iterate with confidence.
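The idea behind tracing can be sketched with a small decorator that records each step's inputs, output, and latency. This is an illustration only: the `traced` decorator, the in-memory `TRACE` list, and the toy `retrieve` and `generate` functions are all hypothetical, and a real platform would persist and visualize this data rather than keep it in a list.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# In-memory trace log (hypothetical; a real platform would store this remotely).
TRACE: List[Dict[str, Any]] = []

def traced(step_name: str) -> Callable:
    """Decorator that records inputs, output or error, and latency for one step."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: Dict[str, Any] = {
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
            }
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["output"] = result
                return result
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so every step is logged.
                record["latency_s"] = time.perf_counter() - start
                TRACE.append(record)
        return wrapper
    return decorator

@traced("retrieve")
def retrieve(query: str) -> List[str]:
    # Toy retrieval step standing in for a vector search.
    return ["Refunds are processed within 5 business days."]

@traced("generate")
def generate(query: str, docs: List[str]) -> str:
    # Toy generation step standing in for an LLM call.
    return f"Based on {len(docs)} document(s): refunds take 5 business days."

@traced("pipeline")
def answer(query: str) -> str:
    return generate(query, retrieve(query))

answer("How long do refunds take?")
for rec in TRACE:
    print(rec["step"], f"{rec['latency_s']:.6f}s")
```

Inner steps finish first, so they appear in the log before the enclosing pipeline step. Inspecting each record shows which stage produced a bad intermediate value.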

Dataset-Driven Evaluation

One of the most powerful features of LLM evaluation platforms is the ability to create structured datasets for consistent testing.

A dataset typically contains:

- An input prompt

- Optional reference context

- An expected output (or scoring guideline)

- Metadata tags for filtering

With this setup, teams can:

- Measure regression after prompt changes

- Compare two different model versions

- Evaluate variability at different temperature settings

- Test domain-specific accuracy at scale

For example, if you build a legal document assistant, you might construct a dataset of contract analysis scenarios. Each change to your prompt template can then be tested against the entire dataset to detect performance improvements or declines.
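A minimal sketch of that regression loop follows. The `Example` record mirrors the dataset fields listed above, and the two `app_*` functions are hypothetical stand-ins for two versions of a prompt template. The questions, answers, and the substring-based scoring rule are illustrative assumptions, not a real benchmark.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class Example:
    prompt: str
    expected: str                      # reference answer or key phrase
    context: str = ""                  # optional reference context
    tags: List[str] = field(default_factory=list)

# Illustrative contract-analysis examples (made up for this sketch).
DATASET = [
    Example("Which party pays the deposit?", "tenant", tags=["lease"]),
    Example("What is the notice period?", "30 days", tags=["lease", "termination"]),
    Example("Who owns the IP?", "employer", tags=["employment"]),
]

def run_dataset(
    app: Callable[[str], str],
    dataset: List[Example],
    tag: Optional[str] = None,
) -> float:
    """Return the fraction of (optionally tag-filtered) examples the app gets right."""
    selected = [ex for ex in dataset if tag is None or tag in ex.tags]
    if not selected:
        raise ValueError(f"no examples match tag {tag!r}")
    hits = sum(ex.expected.lower() in app(ex.prompt).lower() for ex in selected)
    return hits / len(selected)

def app_v1(prompt: str) -> str:
    """Hypothetical baseline prompt template with canned answers."""
    answers = {
        "Which party pays the deposit?": "The tenant pays the deposit.",
        "What is the notice period?": "The notice period is 30 days.",
        "Who owns the IP?": "The employer owns the IP.",
    }
    return answers[prompt]

def app_v2(prompt: str) -> str:
    """Hypothetical revised template that regresses on one question."""
    if prompt == "What is the notice period?":
        return "The notice period is not specified."
    return app_v1(prompt)

baseline = run_dataset(app_v1, DATASET)
candidate = run_dataset(app_v2, DATASET)
print(f"baseline: {baseline:.2f}, candidate: {candidate:.2f}")
```

Filtering by tag, for example `run_dataset(app_v2, DATASET, tag="termination")`, narrows the run to the slice where the regression occurred.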

Human vs. Automated Evaluation

Evaluation strategies typically fall into two categories: human review and automated scoring. Both play important roles.

Human Evaluation

Human reviewers judge responses for:

- Accuracy

- Clarity

- Completeness

- Tone alignment

- Policy compliance

This approach captures nuance that automated metrics often miss. However, it can be expensive and time-consuming.

Automated Evaluation

Automated methods include:

- String and token-overlap metrics (e.g., exact match, token-level F1)

- Embedding similarity scoring

- LLM-as-judge evaluations (using another model to score outputs)

- Rule-based checks for required components

LangSmith and similar platforms make it easier to define evaluators and run them automatically across datasets. While automated evaluation can’t fully replace human review, it enables rapid iteration cycles.
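Two of the simpler automated methods above can be sketched in a few lines: exact match and token-level F1, plus a rule-based check for required terms. The function names and the normalization rule (lowercasing and keeping word characters) are assumptions made for this sketch, not the definitions used by any particular platform.

```python
import re
from collections import Counter
from typing import List

def normalize(text: str) -> List[str]:
    """Lowercase and split into word tokens, dropping punctuation."""
    return re.findall(r"\w+", text.lower())

def exact_match(prediction: str, reference: str) -> bool:
    """True when both texts contain the same tokens in the same order."""
    return normalize(prediction) == normalize(reference)

def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

def contains_required(prediction: str, required: List[str]) -> bool:
    """Rule-based check: every required term must appear in the output."""
    lowered = prediction.lower()
    return all(term.lower() in lowered for term in required)

print(exact_match("30 Days.", "30 days"))
print(token_f1("The notice period is 30 days", "30 days"))
print(contains_required("Notice must be given 30 days prior.", ["notice", "30 days"]))
```

Token-level F1 gives partial credit to a verbose but correct answer, which exact match would score as a failure.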

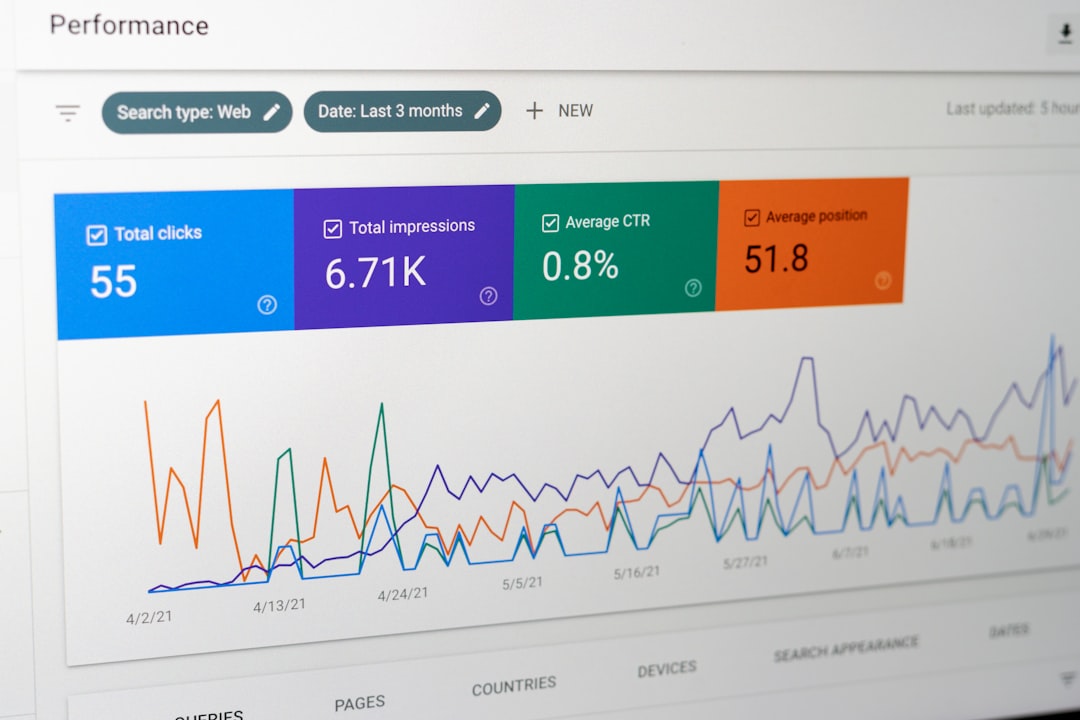

Experimentation and A/B Testing

One of the most practical uses of evaluation tools is experimentation. LLM performance is heavily influenced by prompt wording, system instructions, and model selection.

Consider these common variables:

- Switching from a GPT-3.5-class model to a GPT-4-class model

- Adjusting temperature from 0.2 to 0.7

- Rewriting system messages

- Adding structured output formatting instructions

- Increasing the amount of retrieved context passed to the model

Without structured evaluation, comparing these changes becomes anecdotal. With experiment tracking, teams can quantify how each change affects quality, speed, and cost.

For instance, teams might measure:

- Response relevance score improvement

- Reduction in hallucination frequency

- Latency differences

- Token cost efficiency

This data-driven experimentation shifts LLM development from guesswork to engineering discipline.
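As a rough sketch of what such a comparison involves, the snippet below summarizes two runs and reports the per-metric difference. The `RunResult` fields, the helper names, and every number are made up for illustration and do not come from any real experiment.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List

@dataclass
class RunResult:
    relevance: float   # evaluator score in [0, 1]
    latency_s: float   # wall-clock seconds per response
    tokens: int        # total tokens consumed

def summarize(results: List[RunResult]) -> Dict[str, float]:
    """Average each metric over one experiment's results."""
    return {
        "relevance": mean([r.relevance for r in results]),
        "latency_s": mean([r.latency_s for r in results]),
        "tokens": mean([r.tokens for r in results]),
    }

def compare(baseline: List[RunResult], candidate: List[RunResult]) -> Dict[str, float]:
    """Return candidate minus baseline for each averaged metric."""
    base, cand = summarize(baseline), summarize(candidate)
    return {metric: cand[metric] - base[metric] for metric in base}

# Illustrative, made-up results for two configurations.
BASELINE = [RunResult(0.70, 1.0, 300), RunResult(0.80, 1.2, 320), RunResult(0.60, 0.8, 280)]
CANDIDATE = [RunResult(0.80, 1.5, 450), RunResult(0.90, 1.7, 470), RunResult(0.70, 1.3, 430)]

deltas = compare(BASELINE, CANDIDATE)
for metric, delta in deltas.items():
    print(f"{metric}: {delta:+.2f}")
```

Reporting quality, latency, and token usage together makes the trade-off explicit: here the hypothetical candidate scores higher on relevance but is slower and uses more tokens.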

Evaluating RAG Systems

Retrieval-Augmented Generation (RAG) systems introduce additional complexity. Now, evaluation must account for:

- Retrieval accuracy

- Document grounding

- Citation correctness

- Context utilization

An answer might sound plausible yet fail to use the most relevant retrieved content. Evaluation platforms help analyze both the retrieval stage and the generation stage.

Key metrics for RAG evaluation include:

- Context Precision: Are retrieved documents relevant?

- Answer Faithfulness: Does the output align with retrieved content?

- Groundedness: Does the model avoid fabricating unsupported claims?

By instrumenting these stages, developers can pinpoint whether failures originate from search quality or language generation.
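The split between retrieval and generation can be illustrated with two simple scores. `context_precision` is computed from document IDs labeled as relevant, and `supported_fraction` is a crude lexical proxy for groundedness that counts answer sentences whose words mostly appear in the retrieved text. Both functions, the 0.6 threshold, and the sample data are assumptions for this sketch. Production evaluators typically rely on an LLM judge or an entailment model rather than word overlap.

```python
import re
from typing import List, Set

def context_precision(retrieved_ids: List[str], relevant_ids: Set[str]) -> float:
    """Fraction of retrieved documents that are labeled relevant."""
    if not retrieved_ids:
        return 0.0
    return sum(doc_id in relevant_ids for doc_id in retrieved_ids) / len(retrieved_ids)

def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"\w+", text.lower()))

def supported_fraction(answer: str, contexts: List[str], threshold: float = 0.6) -> float:
    """Fraction of answer sentences whose tokens mostly occur in the contexts.

    A rough lexical proxy for groundedness, not a semantic check.
    """
    context_vocab: Set[str] = set().union(*(_tokens(c) for c in contexts))
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        toks = _tokens(sentence)
        if toks and len(toks & context_vocab) / len(toks) >= threshold:
            supported += 1
    return supported / len(sentences)

# Illustrative, made-up retrieval results and answer.
CONTEXTS = [
    "Refunds are processed within 5 business days.",
    "Shipping is free over 50 dollars.",
]
ANSWER = "Refunds are processed within 5 business days. We also offer lifetime warranty."

precision = context_precision(["d1", "d2", "d3"], {"d1", "d3"})
support = supported_fraction(ANSWER, CONTEXTS)
print(f"context precision: {precision:.2f}")
print(f"supported fraction: {support:.2f}")
```

A low precision score points at the search stage, while a low supported fraction with high precision points at the generation stage.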

Reducing Hallucinations and Risk

One of the most discussed challenges in LLM applications is hallucination—confidently generated but incorrect information. Evaluation tools help reduce hallucinations by:

- Systematically testing factual scenarios

- Comparing outputs against verified reference answers

- Tracking hallucination rates over time

- Flagging unsupported claims

For regulated industries like healthcare, finance, and law, this is not merely a quality issue; it can also be a compliance concern.

Continuous evaluation also helps detect drift when models are updated or when prompt changes introduce unintended behaviors. Treating evaluation as a continuous monitoring process, rather than a one-time test, is critical for maintaining trust.

Integrating Evaluation Into Development Workflows

Modern AI teams increasingly integrate evaluation into CI/CD pipelines. This mirrors traditional software testing but adapts it to probabilistic systems.

A strong workflow might include:

- Building a curated evaluation dataset.

- Defining automated evaluators.

- Running evaluations on every prompt or model change.

- Monitoring regression metrics.

- Triggering human review for flagged cases.

By embedding evaluation early, organizations reduce the risk of shipping degraded experiences into production.
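The regression-monitoring step of that workflow could look something like the sketch below: a gate that compares current scores with a stored baseline and returns a non-zero exit code when any metric drops by more than a tolerance. The function names, the 0.02 tolerance, and the scores are hypothetical, and the sketch assumes higher values are better for every metric.

```python
from typing import Dict, List

def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.02,
) -> List[str]:
    """Return one message per metric that fell more than `tolerance` below baseline.

    Assumes higher is better for every metric.
    """
    failures: List[str] = []
    for metric, base_value in baseline.items():
        value = current.get(metric)
        if value is None:
            failures.append(f"{metric}: missing from current run")
        elif value < base_value - tolerance:
            failures.append(f"{metric}: {value:.2f} vs baseline {base_value:.2f}")
    return failures

def gate(baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.02) -> int:
    """Print regressions and return a process exit code (0 = pass, 1 = fail)."""
    failures = find_regressions(baseline, current, tolerance)
    for message in failures:
        print("REGRESSION:", message)
    return 1 if failures else 0

# Illustrative, made-up scores for a stored baseline and the current change.
BASELINE = {"accuracy": 0.90, "faithfulness": 0.85}
CURRENT = {"accuracy": 0.91, "faithfulness": 0.78}

exit_code = gate(BASELINE, CURRENT)
print("exit code:", exit_code)
```

In a CI job, the returned code would typically be passed to `sys.exit` so that a regression fails the build, and the printed cases could be routed to human review.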

The Broader Landscape of LLM Evaluation Tools

While LangSmith is a prominent platform, the ecosystem of evaluation tools is rapidly expanding. Many platforms focus on:

- Observability and tracing

- Human annotation workflows

- Benchmarking and leaderboard comparisons

- Security and adversarial testing

- Bias detection and fairness analysis

This diversity reflects an evolving understanding: LLM evaluation is multidimensional. Technical accuracy is only part of the equation. Tone, fairness, safety, and brand alignment also matter.

The Future of LLM Evaluation

As models grow more capable, evaluation will become more sophisticated. Emerging trends include:

- Self-evaluating agents that critique and refine their own outputs

- Dynamic benchmarking tailored to specific business objectives

- Custom judge models trained on domain-specific criteria

- Real-time user feedback loops feeding into retraining pipelines

In many ways, evaluation may become as complex as model development itself. Organizations that build strong evaluation frameworks today will have a competitive edge tomorrow.

Conclusion

LLM evaluation tools like LangSmith represent a crucial evolution in AI development. As applications move from demos to mission-critical systems, reliability and accountability become non-negotiable. Tracing tools expose hidden execution flows, dataset-driven testing ensures consistency, and structured experimentation turns intuition into measurable improvement.

The era of “prompt and hope” is ending. In its place, disciplined evaluation methodologies are emerging—bringing rigor to generative AI engineering. For teams serious about deploying language models at scale, investing in evaluation infrastructure isn’t optional. It’s foundational.

Ultimately, the most powerful models aren’t the ones that generate the flashiest outputs—they’re the ones that consistently deliver correct, aligned, and trustworthy results. And that consistency is built through systematic evaluation.