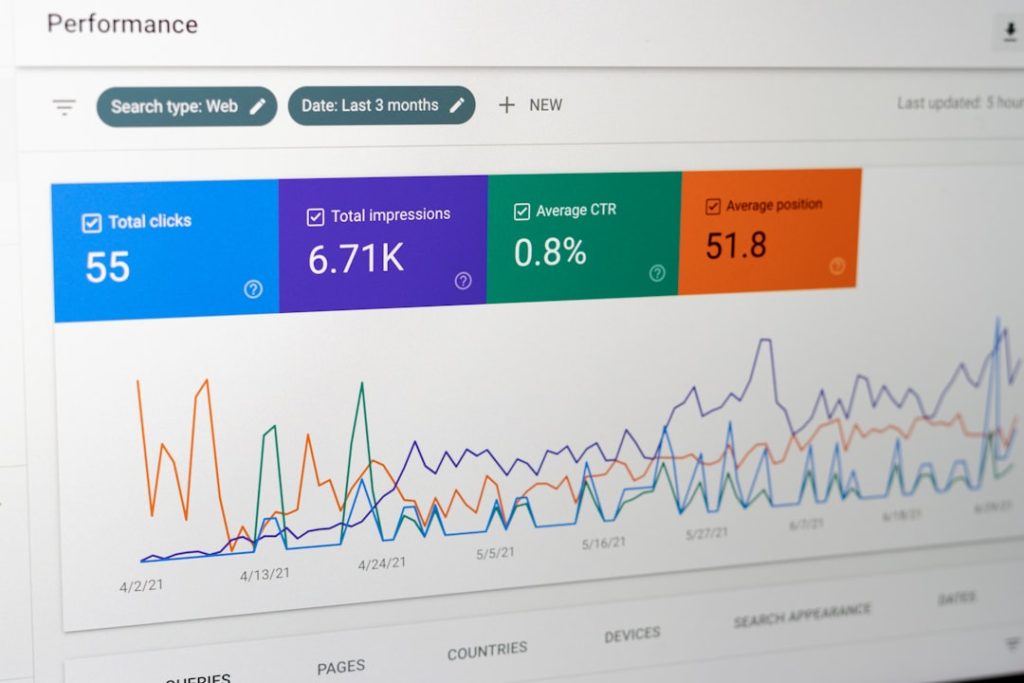

AI models are smart. But they are not magic. Once you deploy them into the real world, things can go wrong. Data changes. Users behave differently. Inputs get messy. That’s why AI model monitoring matters so much. It helps you track performance, catch issues early, and keep your models healthy over time.

TL;DR: AI models need constant monitoring after deployment. Platforms like WhyLabs help track data drift, model accuracy, and performance issues. In this article, we explore six powerful alternatives that make model monitoring easier and smarter. Each tool has its own strengths, so the best choice depends on your team and goals.

Think of model monitoring like a fitness tracker for your AI. It watches everything. It alerts you when something looks off. And it helps you improve over time.

Let’s explore six AI model monitoring platforms similar to WhyLabs that can help you stay in control.

1. Arize AI

Arize AI is one of the most popular model monitoring tools out there. It focuses on observability for machine learning systems. That means it helps you understand what your model is doing and why.

Arize is known for being beginner-friendly. The dashboards are clean. The insights are clear. You don’t need to dig through messy logs.

Key features:

- Performance monitoring for classification, regression, and ranking models

- Drift detection for data and predictions

- Root cause analysis tools

- Real-time and batch monitoring

One cool feature is its ability to compare training and production data side by side. If your model suddenly acts weird, you can quickly see why.
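
To make the side-by-side idea concrete, here is a minimal sketch in plain Python. It is not Arize's API. The function name `compare_feature` and the sample ages are made up for illustration. It puts training and production statistics next to each other and measures how far the production mean has moved, in units of training standard deviations.

```python
from statistics import mean, stdev

def compare_feature(train_values, prod_values):
    """Return side-by-side summary stats and a standardized mean shift."""
    train_mean, prod_mean = mean(train_values), mean(prod_values)
    train_std = stdev(train_values)
    # Shift of the production mean, measured in training standard deviations.
    if train_std > 0:
        shift = (prod_mean - train_mean) / train_std
    else:
        shift = 0.0 if prod_mean == train_mean else float("inf")
    return {
        "train_mean": train_mean,
        "prod_mean": prod_mean,
        "train_std": train_std,
        "prod_std": stdev(prod_values),
        "shift_in_std": shift,
    }

# Hypothetical feature: customer age at training time vs. in production.
train_age = [25, 30, 35, 40, 45]
prod_age = [45, 50, 55, 60, 65]

report = compare_feature(train_age, prod_age)
for name, value in report.items():
    print(f"{name:>12}: {value:.2f}")
```

In this toy data the production mean sits about 2.5 training standard deviations above the training mean. A gap that large is a reasonable place to start looking when predictions change.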

Arize works well for mid-size to large teams. If you want deep visibility into your ML system, it’s a strong choice.

2. Fiddler AI

Fiddler AI focuses on explainability and trust. It helps teams understand not just what their models predict, but why.

This is very important in industries like finance or healthcare. People need explanations. Regulators demand transparency.

What makes Fiddler special?

- Model explainability tools

- Bias detection and fairness analysis

- Data drift monitoring

- Monitoring for NLP and tabular models

Fiddler gives visual breakdowns of feature importance. You can see which inputs are driving predictions. That makes debugging much easier.
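
One common way to estimate feature importance is permutation importance: shuffle one input column and see how much the error grows. The sketch below is a plain-Python illustration, not Fiddler's method or API. The model `toy_model` and the data are hypothetical. The model uses income and ignores age on purpose, so the result is easy to check.

```python
import random

def toy_model(row):
    # Hypothetical model: relies on "income" and ignores "age".
    income, age = row
    return 2.0 * income

def mean_abs_error(model, rows, targets):
    return sum(abs(model(r) - t) for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(model, rows, targets, n_features, seed=0):
    """Error increase when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = mean_abs_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        # Rebuild each row with only column j replaced by a shuffled value.
        shuffled_rows = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_abs_error(model, shuffled_rows, targets) - baseline)
    return importances

# Rows are (income, age) tuples; targets match the toy model exactly.
rows = [(float(i), float(i % 3)) for i in range(1, 21)]
targets = [2.0 * income for income, _ in rows]

importances = permutation_importance(toy_model, rows, targets, n_features=2)
print("income importance:", importances[0])
print("age importance:", importances[1])
```

Shuffling income hurts the predictions, so it gets a positive score. Shuffling age changes nothing, so it scores zero.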

If your team cares deeply about ethical AI, Fiddler is a strong alternative to WhyLabs.

3. Evidently AI

Evidently AI is loved by developers. It started as an open-source tool, and its core library is still open source. That means developers can customize and extend it.

It focuses heavily on data quality and drift detection. Simple. Clear. Practical.

Why do teams like it?

- Open-source flexibility

- Easy integration into ML pipelines

- Pre-built monitoring reports

- Strong community support

You can generate detailed reports with visualizations in just a few lines of code. That’s powerful.
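
To give a feel for what a data quality report checks, here is a small plain-Python sketch. It does not use Evidently's API. The function `data_quality_report` and the sample rows are assumptions for illustration. It summarizes the share of missing values and the value range for each column.

```python
def data_quality_report(rows, columns):
    """Summarize missing values and numeric range per column."""
    report = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        present = [v for v in values if v is not None]
        report[col] = {
            "missing_share": 1 - len(present) / len(values),
            "min": min(present) if present else None,
            "max": max(present) if present else None,
        }
    return report

# Hypothetical batch of production rows; None marks a missing value.
rows = [
    {"age": 34, "income": 52000},
    {"age": None, "income": 61000},
    {"age": 29, "income": None},
    {"age": 41, "income": 48000},
]

quality = data_quality_report(rows, ["age", "income"])
for col, stats in quality.items():
    print(col, stats)
```

A real tool adds charts, drift tests, and many more checks, but the core idea is the same: turn a batch of data into a few numbers you can track.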

Evidently is a great option for startups and technical teams who want control. If you prefer building your own monitoring stack, this tool gives you freedom.

4. Superwise

Superwise focuses on proactive monitoring. It doesn’t just show dashboards. It sends alerts when things break.

Imagine launching a model at night. Suddenly, prediction accuracy drops. Superwise alerts your team before customers complain.

Main strengths:

- Automatic anomaly detection

- Customizable alerts

- Performance degradation tracking

- Clear visualization tools
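
The alerting idea can be sketched in a few lines. This is not Superwise's API. The class `AccuracyMonitor`, the window size, and the 0.8 threshold are all assumptions chosen for the example. It keeps a rolling window of recent predictions and returns an alert message when accuracy in that window falls below the threshold.

```python
from collections import deque

class AccuracyMonitor:
    def __init__(self, window_size=5, threshold=0.8):
        # Only the most recent `window_size` outcomes are kept.
        self.outcomes = deque(maxlen=window_size)
        self.threshold = threshold

    def record(self, prediction, actual):
        """Record one labeled prediction; return an alert message or None."""
        self.outcomes.append(prediction == actual)
        if len(self.outcomes) < self.outcomes.maxlen:
            return None  # not enough data to judge yet
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy < self.threshold:
            return f"ALERT: rolling accuracy {accuracy:.2f} is below {self.threshold:.2f}"
        return None

monitor = AccuracyMonitor(window_size=5, threshold=0.8)

# Hypothetical stream of (prediction, actual) pairs: fine at first, then degrading.
stream = [(1, 1), (0, 0), (1, 1), (1, 1), (0, 0), (1, 0), (0, 1), (1, 0)]
alerts = [monitor.record(p, a) for p, a in stream]

for message in alerts:
    if message:
        print(message)
```

In production you would send the message to a pager or chat channel instead of printing it. You would also need true labels to arrive, which often happens with a delay.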

It supports structured and unstructured data. That means it works for many industries.

Superwise is ideal for companies that rely heavily on production AI systems. It acts like a security guard for your model.

5. Domino Data Lab

Domino Data Lab is more than just a monitoring tool. It’s a full MLOps platform. Monitoring is one part of a bigger ecosystem.

Domino helps teams deploy, manage, govern, and monitor models in one place.

Why consider Domino?

- Enterprise-grade governance

- Full ML lifecycle support

- Collaboration tools for teams

- Model tracking and reproducibility

If you work in a large organization, Domino can centralize everything. It’s structured. Controlled. Secure.

It may feel heavy for small teams. But for enterprises, that structure is a benefit, not a burden.

6. Deepchecks

Deepchecks is all about validation and testing. It helps you check your model before and after deployment.

Think of it like quality control for AI.

Deepchecks automatically runs tests to find issues like:

- Data integrity problems

- Train-test leakage

- Concept drift

- Performance drops

It works well in CI/CD pipelines. That means you can catch issues before your model even goes live.
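
Here is a rough sketch of one such check, train-test leakage, written so it could run as a pipeline step. It is plain Python, not the Deepchecks API. The function names and sample rows are hypothetical. It looks for test rows that also appear in the training set.

```python
def find_leaked_rows(train_rows, test_rows):
    """Return test rows that also appear verbatim in the training set."""
    train_set = set(train_rows)
    return [row for row in test_rows if row in train_set]

def check_no_leakage(train_rows, test_rows, max_leak_share=0.0):
    """Return (passed, leak_share) for an exact-duplicate leakage check."""
    leaked = find_leaked_rows(train_rows, test_rows)
    leak_share = len(leaked) / len(test_rows)
    return leak_share <= max_leak_share, leak_share

# Hypothetical datasets; rows are tuples so they can be hashed and compared.
train = [(5.1, 3.5, "a"), (4.9, 3.0, "a"), (6.2, 2.9, "b")]
test = [(4.9, 3.0, "a"), (5.9, 3.2, "b")]

passed, leak_share = check_no_leakage(train, test)
if not passed:
    # A real CI job would exit with a non-zero status here to block the release.
    print(f"Leakage check failed: {leak_share:.0%} of test rows appear in training data")
```

This only catches exact duplicates. Dedicated tools also look for subtler leaks, such as features that give away the label.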

Deepchecks is great for teams that love automation and strong safeguards.

What Should You Look For in a Model Monitoring Platform?

All the tools above are powerful. But how do you choose?

Here are simple questions to guide you:

- Do you need real-time monitoring?

- Is explainability important for your industry?

- Do you want open-source flexibility?

- Are you working in a highly regulated environment?

- How big is your team?

Some teams need simple dashboards. Others need deep analytics and governance controls.

There is no single perfect tool. The best platform is the one that fits your workflow.

Why Model Monitoring Is Not Optional

Here’s the truth. Models decay.

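Data changes over time. When the inputs a model sees shift away from its training data, that is called data drift. When the relationship between inputs and the right answer shifts, that is called concept drift. For example: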

- Customer behavior shifts

- Economic conditions change

- New products get introduced

- User inputs become noisy
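
One widely used way to put a number on data drift is the Population Stability Index (PSI). The sketch below is a minimal plain-Python version under simple assumptions: equal-width bins taken from the training data, and a tiny floor so empty bins do not break the logarithm. The function name `psi` and the sample data are made up for illustration.

```python
import math

def psi(expected, actual, n_bins=4):
    """Population Stability Index between a baseline and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width) if width > 0 else 0
            idx = min(max(idx, 0), n_bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values at training time and after a shift in production.
train_values = list(range(0, 100))
prod_values = list(range(50, 150))

print("PSI, no drift:", psi(train_values, train_values))
print("PSI, shifted:", psi(train_values, prod_values))
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift, though the right threshold depends on your data and how many bins you use.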

If you don’t monitor your model, performance silently drops. Revenue can fall. Trust can break. Compliance risks can rise.

Monitoring gives you visibility. Visibility gives you control.

And control keeps your AI useful.

Final Thoughts

AI deployment is not the finish line. It’s the starting line.

Tools like Arize AI, Fiddler, Evidently AI, Superwise, Domino Data Lab, and Deepchecks help you stay ahead of problems. They track performance. They detect drift. They explain predictions. They protect your business.

Some are lightweight and developer-friendly. Others are enterprise-level powerhouses. Each one offers a different flavor of observability.

In the end, AI success is not just about building smart models. It’s about keeping them smart.

And with the right monitoring platform, you can do exactly that.